While various web scraping tools are available, you could learn a useful programming language like Python and write your own code to scrape websites quickly and accurately. But what exactly is web scraping, and what are its various uses? In this article, we'll answer these questions and provide you with actionable steps that will get you web scraping in no time!

What is Web Scraping?

Also known as web data extraction and web harvesting, web scraping is the process of extracting data from a website. To gather data from publicly available sources such as websites, you'll need to perform web scraping. In our increasingly data-driven world, big data is worth a lot of money: according to a report from Research and Markets, the big data market is projected to grow from $162.6 billion in 2021 to $273.4 billion in 2026.

Here are some practical tips to help you scrape efficiently.

Proxies will help us hide our IP address and, as a result, make more requests to the same server without being banned. This matters especially on social networks, where IP bans are very frequent.

The user agent is a text string that allows servers to identify the device we are connecting from. By changing it, we can connect as if we were an iPhone, an Android phone, etc.

If the site does not expose an API and you still need its data, look for a server-side JSON request; you may find the data you are looking for there. In most desktop browsers, press F12 to open the developer tools window. Reload the web page and go to the Network tab to see the records ending in .json; from each one you can identify the URL it came from. Then open a new tab, paste that URL, and the JSON with the data will be displayed. Either way, read the site's requirements and its data usage policy.
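The proxy, user-agent and JSON tips can be combined in a few lines of Python. This is a minimal sketch using only the standard library (`urllib.request`), not a definitive implementation: the mobile user-agent string is shortened and illustrative, the proxy address you pass in is a placeholder, and `make_opener`, `make_request`, `parse_json` and `fetch_json` are hypothetical helper names. `fetch_json` is meant for a `.json` URL copied from the Network tab.

```python
import json
import urllib.request
from typing import Optional

# Illustrative, shortened mobile user-agent; real browsers send longer strings.
MOBILE_UA = "Mozilla/5.0 (iPhone; CPU iPhone OS 17_0 like Mac OS X)"


def make_opener(proxy: Optional[str] = None) -> urllib.request.OpenerDirector:
    """Return an opener that routes HTTP(S) traffic through `proxy` if given."""
    handlers = []
    if proxy:
        handlers.append(urllib.request.ProxyHandler({"http": proxy, "https": proxy}))
    return urllib.request.build_opener(*handlers)


def make_request(url: str, user_agent: str = MOBILE_UA) -> urllib.request.Request:
    """Build a GET request that identifies itself with the given User-Agent."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def parse_json(raw: bytes):
    """Decode a UTF-8 JSON response body into Python objects."""
    return json.loads(raw.decode("utf-8"))


def fetch_json(url: str, proxy: Optional[str] = None,
               user_agent: str = MOBILE_UA, timeout: float = 10.0):
    """Download a JSON endpoint found in the browser's Network tab."""
    opener = make_opener(proxy)
    with opener.open(make_request(url, user_agent), timeout=timeout) as resp:
        return parse_json(resp.read())
```

A call would look like `fetch_json("https://example.com/api/items.json", proxy="http://127.0.0.1:8080")`, where both addresses are placeholders. Keeping the opener and the request separate makes it easy to rotate proxies or user agents between calls.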

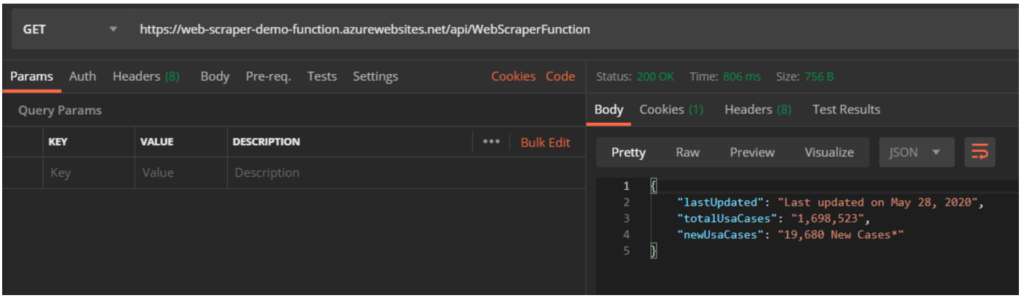

If the site has an API, you don't need to program a scraper, unless the data you want is not provided by the API. Most sites expose APIs for programmers to get the data, and they also provide supporting documentation.

Navigation filtering

Do not process every link unless necessary. It's natural to be tempted to go after everything, but that would be a total waste of bandwidth, time and storage. Instead, program a proper crawling algorithm so that the scraper goes only through the pages you need.

It is simpler and safer to cut the scraper into several short phases. For example, you could split the scraping of a large site into two: one phase to accumulate links to the pages you need data from, and another to download those pages and analyze their content.

Keep a table of URLs for all the links you've already crawled, either in a database table or in a key-value store. If the crawler crashes when it is about to finish, this list will save you; without it, a lot of time and bandwidth would be consumed in vain. Therefore, make sure to persist the list of URLs.

Large websites deploy services that can track crawling activity on the site. If you send simultaneous requests from the same IP address, they may treat you as a DoS (Denial of Service) attack on their website and block you immediately. It is therefore advisable to review your requests and chain them one after the other, making them more human-like. Determine the average response time of the website, and then decide how many simultaneous requests to send to it.
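The URL table, the navigation filter, the first of the two phases and the pacing advice can be sketched together as follows. This is an illustration under assumptions: SQLite (from Python's standard library) stands in for "a table or a key-value store"; `CrawlState`, `wanted`, `collect_links` and `Throttle` are hypothetical names; and waiting twice the average response time (1 second before anything has been measured) is an arbitrary starting point, not a value any site publishes.

```python
import sqlite3
import time
from urllib.parse import urljoin, urlparse


class CrawlState:
    """Persist already-crawled URLs in SQLite so a crashed crawl can resume."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)  # ":memory:" works for quick tests
        self.conn.execute("CREATE TABLE IF NOT EXISTS crawled (url TEXT PRIMARY KEY)")
        self.conn.commit()

    def seen(self, url: str) -> bool:
        row = self.conn.execute("SELECT 1 FROM crawled WHERE url = ?", (url,)).fetchone()
        return row is not None

    def mark(self, url: str) -> None:
        self.conn.execute("INSERT OR IGNORE INTO crawled (url) VALUES (?)", (url,))
        self.conn.commit()  # commit at once so progress survives a crash


def wanted(url: str, domain: str, prefix: str) -> bool:
    """Navigation filter: follow only links on `domain` whose path starts with `prefix`."""
    parts = urlparse(url)
    return parts.netloc == domain and parts.path.startswith(prefix)


def collect_links(base: str, hrefs: list[str], domain: str, prefix: str,
                  state: CrawlState) -> list[str]:
    """Phase one: resolve raw hrefs into a deduplicated queue of pages to download later."""
    queue: list[str] = []
    for href in hrefs:
        url = urljoin(base, href)
        if wanted(url, domain, prefix) and not state.seen(url) and url not in queue:
            queue.append(url)
    return queue


class Throttle:
    """Space out sequential requests based on the site's average response time."""

    def __init__(self, factor: float = 2.0, default: float = 1.0) -> None:
        self.factor = factor    # assumed multiplier; tune it per site
        self.default = default  # assumed delay before any response has been timed
        self.samples: list[float] = []

    def record(self, seconds: float) -> None:
        self.samples.append(seconds)

    def delay(self) -> float:
        if not self.samples:
            return self.default
        return self.factor * sum(self.samples) / len(self.samples)

    def wait(self) -> None:
        time.sleep(self.delay())
```

Phase two would then download each queued URL one at a time, call `state.mark(url)` after a successful download, record the elapsed time with `throttle.record(...)`, and call `throttle.wait()` before the next request. Committing after every `mark` is slower than batching, but it is what lets the crawl resume after a crash.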

Web scraping can automatically retrieve data and transform it into a usable structure for us. One of the best-known ways to do it is with Selenium and Python; I have written a tutorial about it to make it easy to apply.

When traversing large websites, it is always good to store the data you have already downloaded, so that you don't have to scrape the same pages again if the program crashes before finishing. Storing it in a key-value store like Redis is simple, but you can also use MySQL or any other file-system caching mechanism.
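A minimal sketch of such a download cache follows. It swaps in Python's standard-library `shelve` module for Redis or MySQL so the example runs without a database server; `fetch_cached` is a hypothetical name, and `fetch` is assumed to be whatever function actually downloads a page in your scraper.

```python
import shelve
from typing import Callable


def fetch_cached(url: str, cache_path: str, fetch: Callable[[str], str]) -> str:
    """Return the page for `url`, downloading it only if it is not cached yet."""
    # Opening the shelf per call is slow but simple: closing it after each
    # page flushes the data to disk, so a crash loses at most one download.
    with shelve.open(cache_path) as cache:
        if url in cache:
            return cache[url]
        body = fetch(url)
        cache[url] = body
        return body
```

Because the cache is keyed by URL, rerunning the scraper after a crash only downloads the pages that were not stored yet. For a large crawl you would keep one shelf (or one Redis connection) open for the whole run instead.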

Web scraping is a method for collecting, organizing and analyzing information that is spread over the Internet in a disorganized way.
